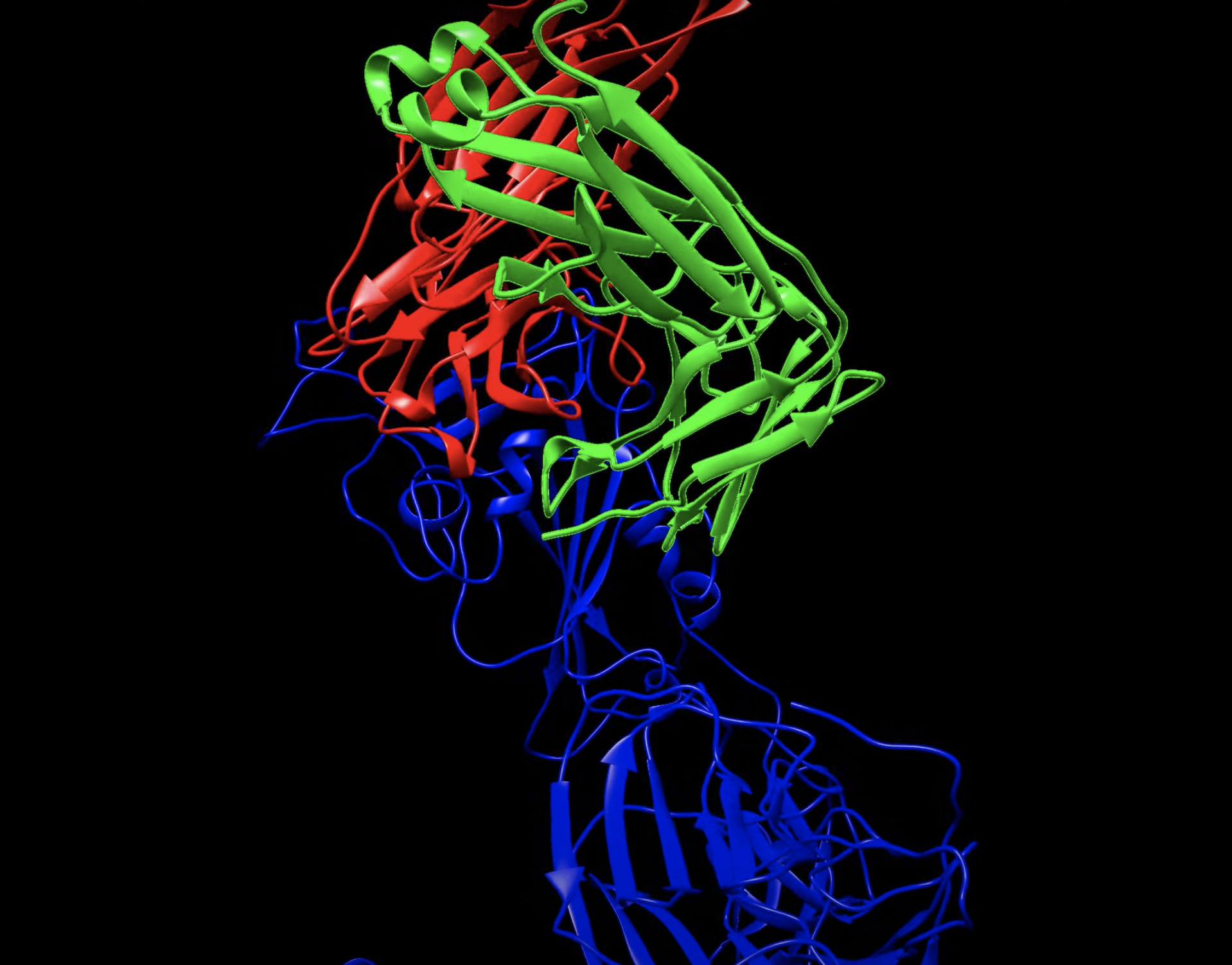

As the COVID-19 pandemic began taking hold in early 2020, Jeremy Smith of Oak Ridge National Laboratory (ORNL) and the University of Tennessee, Knoxville, (UTK) emailed his team about working on the problem. With SARS-CoV-2 genome sequence data, Smith’s team could use computational strategies to seek drug molecules that bind to the virus’s exterior spike protein and potentially prevent infection.

Postdoctoral researcher Micholas Smith (no relation) took on the challenge and soon performed docking calculations – initial computational screenings of 8,000 compounds against this target. In mid-February, the team reported 77 molecules that showed enhanced binding to the spike protein based on their screens, among the first done for COVID-19 antiviral therapies.

Over the pandemic’s course, the team has accelerated its docking calculations roughly 20-fold, screening a billion compounds against a single disease target in 15 hours – a process that once took two weeks. Collaborators, such as Colleen Johnson at the University of Tennessee Health Science Center in Memphis, and a pharmaceutical company, Berg Health, have tested promising compounds in the laboratory. Several of those initial computational hits also have bound tightly to viral proteins in laboratory experiments, and some compounds that showed up in their screens are now in clinical trials.

The ORNL team’s initial work was an early demonstration of how the Department of Energy’s (DOE’s) computational science resources could help fight COVID-19, building on supercomputing investments and projects that had focused on finding drugs to treat cancer and other diseases. This groundwork led DOE to form, with the White House Office of Science and Technology Policy and IBM, the COVID-19 High Performance Computing (HPC) Consortium. The consortium has linked researchers worldwide with 600 petaflops across 43 HPC systems, resulting in more than 100 projects.

“Drugs work by interacting with proteins,” says Marti Head, director of ORNL’s and UTK’s Joint Institute for Biological Sciences. “They bind to part of a protein, and that effect percolates through the biological system.” To pivot toward COVID-19, researchers needed to shift focus from cancer to viral-protein targets and devise computational predictions that biological experiments could validate quickly. With the looming emergency, getting results as efficiently as possible became a top priority.

Labs began organizing and collaborating in those early months. Those activities took off with CARES Act funding for DOE’s National Virtual Biotechnology Laboratory, which guided representatives from all 17 national laboratories toward a series of projects. One of those efforts – molecular design – has searched for antibodies, nanobodies and small-molecule drugs that can target all stages of the virus’s life cycle. It involves researchers from nine of the national labs.

Developing drugs to fight any disease is daunting. On average it takes up to 15 years and more than $2 billion to develop and test a successful drug. Even when molecules show promise in the laboratory and advance to clinical testing, more than 90 percent fail because they aren’t effective in humans or cause toxic side effects. Although coronavirus vaccines are now available, antiviral drugs remain important to help infected patients and as an added line of defense against rapidly evolving variants.

Computation offers the prospect of accelerating drug discovery and lowering costs. Computers can’t eliminate the need for laboratory experiments and clinical trials, but with initial screens, they can significantly narrow the initial pool of drug candidates, saving the experimental and clinical research steps for the most promising candidates. The initial stages of discovery research often are described as a funnel, says Shantenu Jha of Brookhaven National Laboratory and Rutgers University. “We start with many bazillions of (molecules), and you want to come out at the other end with a much more selective, higher quality, refined set of drug candidates.”

DOE’s main experience with early-stage drug development was in 2016’s Cancer Moonshot program, an initiative that applied HPC to medicine and supported collaborations among DOE, the National Institutes of Health (NIH), academia and industry. For example, the Joint Design of Advanced Computing Solutions for Cancer (JDACS4C) Project brought four national labs – Argonne, ORNL, Lawrence Livermore and Los Alamos – into partnership with the NIH’s National Cancer Institute to apply HPC and artificial intelligence to cancer research. This collaboration got a boost from the DOE Exascale Computing Project’s efforts to build a scalable deep neural network code called CANDLE, for CANcer Distributed Learning Environment. The collaboration also led to a public-private partnership through the Accelerating Therapeutics for Opportunities in Medicine (ATOM) consortium that linked researchers from Lawrence Livermore, pharmaceutical company GSK, NCI’s Frederick National Laboratory for Cancer Research and the University of California, San Francisco. Earlier this year, ATOM announced that Argonne, Brookhaven and ORNL were joining. Many of these researchers have focused on COVID-19 over the past year.

To replicate, viruses must infect host cells and hijack their components. Therefore, combating a virus involves a delicate balance: shutting down the infectious agent while protecting the host. Research on HIV, Ebola and other viruses has shown that targeting various parts of the virus life cycle is critical, and NVBL researchers have been screening molecules against nearly a dozen proteins involved from initial infection to virus replication to release. Researchers will validate promising computational results with biochemical assays.

Molecular dynamics (MD) simulation software is an important part of computational drug screening because it models how a target interacts with a drug. For example, in 2019 Jha, Argonne’s Arvind Ramanathan and their team released DeepDriveMD, software that helped improve protein MD simulations. (See sidebar: “Going deep on COVID’s spike.”) Researchers get critical information about proteins’ structures from experiments, but most of those techniques provide a single snapshot of a molecule rather than capturing its constant movements and rotations around chemical bonds.

MD algorithms solve Newton’s laws of motion for every particle in a system, Ramanathan says. They calculate energies of atoms as they interact with each other, and those numbers predict arrangements’ relative likelihood. To get meaningful answers, such simulations must stitch together billions, sometimes trillions, of snapshots. Each represents roughly one quadrillionth of a second, and to model meaningful interactions, modelers must assemble a series of images showing complex motions over seconds to minutes.
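To make the idea of stepping Newton’s laws forward in time concrete, here is a minimal sketch, not the team’s code. It assumes a toy system of two particles joined by a harmonic spring in one dimension, integrated with the widely used velocity Verlet scheme. The function names, the spring model and every parameter value are illustrative assumptions; production MD packages use far richer force fields and millions of atoms.

```python
def harmonic_force(x, k, r0):
    """Forces on two particles joined by a spring (toy stand-in for a bond)."""
    stretch = (x[1] - x[0]) - r0
    f0 = k * stretch            # a stretched spring pulls particle 0 toward particle 1
    return [f0, -f0]            # Newton's third law: equal and opposite


def total_energy(x, v, m, k, r0):
    """Kinetic plus potential energy; should stay nearly constant."""
    kinetic = sum(0.5 * m[i] * v[i] ** 2 for i in range(2))
    potential = 0.5 * k * ((x[1] - x[0]) - r0) ** 2
    return kinetic + potential


def velocity_verlet(x, v, m, k, r0, dt, n_steps):
    """Advance positions and velocities n_steps times; return the snapshots."""
    x, v = list(x), list(v)
    f = harmonic_force(x, k, r0)
    trajectory = [tuple(x)]
    for _ in range(n_steps):
        # Half-step velocity update using the current forces.
        v = [v[i] + 0.5 * dt * f[i] / m[i] for i in range(2)]
        # Full-step position update.
        x = [x[i] + dt * v[i] for i in range(2)]
        # Recompute forces at the new positions, then finish the velocity update.
        f = harmonic_force(x, k, r0)
        v = [v[i] + 0.5 * dt * f[i] / m[i] for i in range(2)]
        trajectory.append(tuple(x))
    return x, v, trajectory


# Hypothetical parameters in arbitrary units, chosen only for illustration.
masses = [1.0, 1.0]
x0, v0 = [0.0, 1.2], [0.0, 0.0]
e_start = total_energy(x0, v0, masses, k=1.0, r0=1.0)
x_end, v_end, trajectory = velocity_verlet(x0, v0, masses, k=1.0, r0=1.0,
                                           dt=0.01, n_steps=1000)
e_end = total_energy(x_end, v_end, masses, k=1.0, r0=1.0)
```

Each pass through the loop yields one snapshot. Comparing `e_start` with `e_end` is a common sanity check, since a sound integrator keeps the total energy of an isolated system nearly constant.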

Machine learning can help analyze the large volumes of data generated, says ORNL’s Debsindhu Bhowmik, a team member. Overall, it helps researchers manage calculations’ size and complexity. Machine learning reduces data’s dimensions, simplifying a chemical structure or its description to a couple dozen key numerical features.
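As a rough illustration of what reducing dimensions means, the sketch below uses principal component analysis, a much simpler technique than the deep-learning models the team describes, swapped in here only because it fits in a few lines. It assumes a toy data set of three-number “descriptors” that really vary along one hidden direction; the data, function names and parameters are all hypothetical.

```python
import math
import random


def center(rows):
    """Subtract each column's mean so the data are centered on zero."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    return [[r[j] - means[j] for j in range(d)] for r in rows]


def covariance(centered):
    """Sample covariance matrix of already-centered rows."""
    n, d = len(centered), len(centered[0])
    return [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)]
            for i in range(d)]


def leading_component(cov, n_iter=200):
    """Power iteration: find the direction of greatest variance."""
    d = len(cov)
    vec = [1.0 / math.sqrt(d)] * d
    for _ in range(n_iter):
        new = [sum(cov[i][j] * vec[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(c * c for c in new))
        vec = [c / norm for c in new]
    return vec


def project(centered, component):
    """Collapse each row to a single number along the chosen direction."""
    return [sum(a * b for a, b in zip(row, component)) for row in centered]


# Toy data: points scattered along the direction (1, 2, 0) with slight noise.
rng = random.Random(0)
rows = []
for _ in range(200):
    t = rng.uniform(-1.0, 1.0)
    rows.append([t + rng.gauss(0, 0.01),
                 2 * t + rng.gauss(0, 0.01),
                 rng.gauss(0, 0.01)])

centered = center(rows)
component = leading_component(covariance(centered))
reduced = project(centered, component)   # 200 rows, one feature each
```

Here three numbers per sample shrink to one with little information lost, because the toy data vary along a single direction. Real molecular data are far higher-dimensional, and the patterns worth keeping are generally nonlinear.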

As the number of atoms in a molecular system increases, the computational resources needed for simulations balloon dramatically. And proteins include many bonds that can rotate, altering the shape and energies of pockets and crevices where molecules can bind.

Plus, to find interesting biological events, researchers must sample many different pathways and accumulate enough snapshots of them. DeepDriveMD prevents simulations from bogging down in a low-energy well and substantially accelerates event-sampling.

Working on COVID-19 pushed the team to focus on the best possible results and the most efficient use of computational resources. Collaborators developed a three-step workflow, Jha says, starting with docking – a quick assessment of a molecule’s and protein’s binding ability. Then they’d use DeepDriveMD to refine their binding simulations. After identifying the most promising candidates, they’d calculate binding energies, incorporating detailed physics of the interactions.

That three-step screen process can winnow 10 million molecules to dozens in a single 24-hour run on half of Summit, the IBM AC922 system at the Oak Ridge Leadership Computing Facility. That’s a profound change, Jha says, “inconceivable a few years ago” in scale and calculational complexity.
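The funnel logic can be sketched in a few lines. This is a toy model under stated assumptions, not the collaboration’s workflow: each “molecule” is a dictionary with a made-up `true_energy`, and each stage is a hypothetical scoring function whose estimate gets less noisy, standing in for docking, refined simulation and binding-energy calculation. The library size and cutoffs are invented for illustration.

```python
import heapq
import random


def make_library(n, seed=0):
    """Build a hypothetical compound library with hidden 'true' binding energies."""
    rng = random.Random(seed)
    return [{"id": i, "true_energy": rng.gauss(0.0, 1.0)} for i in range(n)]


def noisy_estimator(noise, seed):
    """Return a scoring function; smaller noise mimics a costlier, more accurate method."""
    rng = random.Random(seed)

    def score(mol):
        return mol["true_energy"] + rng.gauss(0.0, noise)

    return score


def funnel(candidates, stages):
    """Apply each (score_fn, keep) stage in turn, keeping the lowest-scoring molecules."""
    survivors = list(candidates)
    for score_fn, keep in stages:
        # Lower score means stronger predicted binding in this toy model.
        survivors = heapq.nsmallest(keep, survivors, key=score_fn)
    return survivors


library = make_library(10_000)
stages = [
    (noisy_estimator(noise=1.0, seed=1), 1_000),   # stand-in for fast docking
    (noisy_estimator(noise=0.3, seed=2), 100),     # stand-in for refined simulation
    (noisy_estimator(noise=0.05, seed=3), 10),     # stand-in for binding-energy calculation
]
hits = funnel(library, stages)
```

The design point is that the cheapest, least accurate method touches every compound, while the most expensive one runs only on the few survivors. That ordering is what makes a very large library tractable.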

Felice Lightstone of Livermore and her colleagues also have contributed computational tools to the NVBL molecular therapeutics work, screening small-molecule libraries and designing antibodies along with validation experiments. Initial screening included libraries of molecules already used to treat other conditions – ones already safety tested, thus reducing later rounds of trials. But new drugs will be important, too. Using Livermore’s Sierra supercomputer, they’ve improved machine-learning models’ speed and precision for finding novel drug compounds. In a project that was a 2020 finalist for the Gordon Bell Special Prize for High Performance Computing-Based COVID-19 Research, the team trained its model on 1.6 billion small molecules and a million other compounds. It reduced model training time from a full day to 23 minutes.

Through the ATOM project, the Livermore group also developed tools that scan for possible toxic side effects. Collaborating with the American Heart Association and others, for example, the group created a library of compounds that interfere with proteins crucial to properly functioning hearts, brains and other vital organs, Lightstone says, enabling the team to “selectively screen through and see if we see any bad actors.”

To date, the NVBL has identified more than 2,000 compounds that have now been assayed against COVID-19 drug targets. Of those, 56 molecules inhibited SARS-CoV-2 infection and are under further study.

This massive effort to screen compounds that target something as complex as the coronavirus has been unprecedented in speed and scale, Argonne’s Ramanathan says. And, he says, it offers a model for other urgent large-scale projects.

To which ORNL’s Head adds, “We can’t allow that long-term investment to slip.” Even with vaccines, continued work on antiviral drugs for COVID-19 remains important, to treat people who become infected and manage new viral variants. After all, it was earlier research on coronaviruses – the 2003 SARS and 2012 MERS outbreaks – that provided critical structural knowledge that helped researchers get a head start when the pandemic emerged.

Likewise, lessons from today will help scientists understand other coronaviruses and will be useful for fighting other diseases such as influenza, Zika or West Nile. “For the future,” Head asks, “how do you contextualize what we’ve been learning for this virus in the bigger picture so that we’re more ready to respond next time?”

This past year also highlights how computers, data and health can unite to address big challenges in medicine, ORNL’s Jeremy Smith says. “The pandemic has given impetus to the idea that powerful computing can be a useful tool in combatting diseases. People are cognizant of that now, and it’s exciting to see where that’s going to go.”