It’s impossible, of course, to bring the outdoors into a laboratory for experiments. That’s why scientists rely on computer models to predict long-range climate change.

But those projections are only as good as the models producing them. In the early 1990s, CHAMMP – Computer Hardware, Advanced Mathematics and Model Physics – improved both.

Started by the Department of Energy’s Office of Biological and Environmental Research, CHAMMP was designed to rapidly advance the science of long-range climate prediction.

With additional support from DOE’s Office of Advanced Scientific Computing Research (ASCR), CHAMMP brought climate modeling into the world of high-performance computing. It put models on the latest parallel computers, which divide complex problems among multiple processors for faster solutions.

CHAMMP “has the legacy of bringing DOE lab experts on computational fluid dynamics (CFD), numerical methods, and computer science into the climate modeling community,” says David Bader of DOE’s Lawrence Livermore National Laboratory. Bader directed CHAMMP and now is chief scientist for its successor, the Climate Change Prediction Program.

CFD models fluid flow, such as air movement. Numerical methods convert complex phenomena into algorithms – mathematical recipes – that computers use to simulate those phenomena.
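
To make those two ideas concrete, here is a minimal sketch in Python. It is not code from CHAMMP or any real climate model. It assumes a toy problem: heat spreading around a ring of grid points, advanced one time step with a simple finite-difference recipe. The function names, the `alpha` mixing parameter and the four "workers" are all hypothetical. The second function splits the ring into chunks, the way a parallel computer divides a grid among processors, and gives each chunk one borrowed "halo" value from each neighbor.

```python
def diffuse_step(temps, alpha=0.1):
    """Advance a ring of temperatures by one explicit finite-difference step.

    Each point moves toward the average of its two neighbors; `alpha`
    (an assumed, illustrative value) controls how fast.
    """
    n = len(temps)
    return [
        temps[i] + alpha * (temps[(i - 1) % n] - 2 * temps[i] + temps[(i + 1) % n])
        for i in range(n)
    ]


def diffuse_step_decomposed(temps, n_workers=4, alpha=0.1):
    """Same step, but computed chunk by chunk, as separate processors would.

    The chunks are processed in a plain loop here; on a parallel machine
    each chunk would live on its own processor.
    """
    n = len(temps)
    # Contiguous index ranges, one per hypothetical worker.
    bounds = [(w * n // n_workers, (w + 1) * n // n_workers) for w in range(n_workers)]
    result = []
    for start, stop in bounds:
        # "Halo" values: the one neighbor point needed from each adjacent chunk.
        left_halo = temps[(start - 1) % n]
        right_halo = temps[stop % n]
        local = [left_halo] + temps[start:stop] + [right_halo]
        # Update only the points this worker owns (skip the two halo cells).
        result.extend(
            local[j] + alpha * (local[j - 1] - 2 * local[j] + local[j + 1])
            for j in range(1, len(local) - 1)
        )
    return result


if __name__ == "__main__":
    field = [float(i % 5) for i in range(16)]
    print(diffuse_step_decomposed(field) == diffuse_step(field))
```

Both functions apply the same arithmetic to the same values, so the decomposed version reproduces the single-processor answer. Real models face the same requirement on far larger three-dimensional grids, where the exchange of halo values between processors becomes a major cost.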

In the early 1990s, CHAMMP helped develop a leading U.S. climate representation, the Parallel Climate Model or PCM, one of two predecessors to the current Community Climate System Model, Version 3 (CCSM).

The Intergovernmental Panel on Climate Change considered data from the CCSM and other models before issuing its April 2007 report confirming global warming.

CCSM is still a leading model – and it’s about to get better. DOE’s Argonne National Laboratory is testing it to run with unprecedented detail on the IBM Blue Gene/L, one of the world’s most powerful computers.

That step is traceable to CHAMMP.

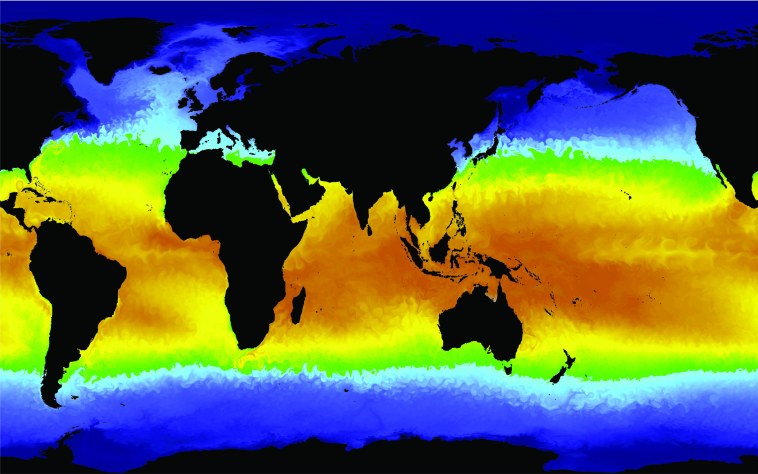

A run of the Parallel Ocean Program developed through the CHAMMP program generated this image of a global sea surface temperature field. Red and yellow colors are warmer temperatures; green and blue are cooler. Courtesy of Julie McClean, Lawrence Livermore National Laboratory.

“CHAMMP was just one piece of DOE’s modeling program,” Bader says. It “was the model development part, which was new because DOE hadn’t done that.”

One goal, Bader says, was to adapt models to run on ASCR-supported high-performance parallel computers then coming on line. Among them were:

- The Thinking Machines CM2 and CM5, installed in 1989 and 1992, respectively, at Los Alamos National Laboratory.

- The Cray C90 installed at DOE’s National Energy Research Scientific Computing Center (NERSC) in 1991 and at the National Science Foundation’s National Center for Atmospheric Research (NCAR) in 1996.

- The Intel Paragon installed at Oak Ridge National Laboratory in 1992.

- The IBM SP1 and SP2. Argonne installed the SP1 in 1993 and upgraded it to an SP2 in 1995. Oak Ridge took delivery of an SP2 in 1994.

- The Cray T3D installed at NCAR in 1994 and the Cray T3E installed at NERSC in 1996.

Considered cutting-edge at the time, each of the computers is puny by today’s standards, when parallel machines often have thousands of processors.

Researchers John Drake at Oak Ridge, Ian Foster at Argonne, and James Hack and Dave Williamson at NCAR developed the parallel computing version of NCAR’s Community Climate Model, an existing atmospheric General Circulation Model, or GCM.

With support from the Office of Biological and Environmental Research, DOE researchers John Dukowicz, Bob Malone and Rick Smith at Los Alamos reworked existing ocean General Circulation Model algorithms developed by the Naval Postgraduate School’s Bert Semtner. The result: the Parallel Ocean Program (POP), which still is the CCSM’s ocean component. The atmosphere and ocean modeling teams later worked with Warren Washington’s NCAR group to couple these components into the DOE Parallel Climate Model. It was the first time atmospheric and oceanic models were coupled in a massively parallel computing environment, Bader says.
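
As a rough illustration of what coupling means, the Python sketch below links two toy components that trade heat at every time step. It is an assumption-laden teaching example, not the PCM's actual method: the `Component` class, the `couple` function, the exchange coefficient and the heat capacities are all hypothetical, and each "model" is reduced to a single temperature.

```python
from dataclasses import dataclass


@dataclass
class Component:
    """A toy stand-in for a full atmosphere or ocean model."""
    temperature: float    # degrees C (illustrative value)
    heat_capacity: float  # arbitrary units; the ocean's is much larger

    def apply_flux(self, flux, dt):
        # Heat gained (or lost, if flux is negative) changes temperature.
        self.temperature += flux * dt / self.heat_capacity


def couple(atmosphere, ocean, steps, dt=1.0, exchange_coeff=0.05):
    """Step both components together, exchanging heat across the interface."""
    for _ in range(steps):
        # Positive flux means heat flows from atmosphere to ocean.
        flux = exchange_coeff * (atmosphere.temperature - ocean.temperature)
        # Whatever one component loses, the other gains.
        atmosphere.apply_flux(-flux, dt)
        ocean.apply_flux(flux, dt)
    return atmosphere, ocean


if __name__ == "__main__":
    air, sea = couple(Component(20.0, 1.0), Component(10.0, 50.0), steps=100)
    print(round(air.temperature, 3), round(sea.temperature, 3))
```

The key design point is that the same flux is subtracted from one component and added to the other, so total heat is conserved while the two temperatures converge. In a real coupled model the exchanged quantities are full two-dimensional fields that must also be passed between processors and between differing grids.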

A close-up of the Parallel Ocean Program global sea surface temperature field image (see previous image) shows the simulation’s eddy detail.

CHAMMP was so successful, it may have put itself out of business. Bader says it had met its objectives after six or seven years.

“We had tried out new computer architectures, had developed a state-of-the-art coupled climate model to use those architectures and be portable between them, and Warren Washington had taken those and applied it to climate research,” he adds. DOE’s Climate Change Prediction Program absorbed CHAMMP.

Nonetheless, CHAMMP was a vital part of the joint DOE-National Science Foundation effort to build an effective climate model, Bader says: “It’s a part of some of the blocks of the foundation for the CCSM.”

With the ability to run on massively parallel computers, the CCSM is a leading general circulation climate model. The code is available to anyone, enabling climate research around the world.

ASCR and Biological and Environmental Research still support numerical aspects of model development, including scaling the models to run on today’s huge parallel computers. The latest versions use the Model Coupling Toolkit, developed at Argonne, to build the parallel CCSM from individual ocean, ice and land models.

The Scientific Discovery through Advanced Computing (SciDAC) program, sponsored by DOE’s Office of Science, supports work to revamp the CCSM so it more accurately couples greenhouse gas emissions and other factors.

Those improvements, and improvements in other models, make climate predictions more accurate than ever, Bader says. Because of that, “the human effect on climate has been documented so that it’s almost irrefutable.”